Can an AI language model become self-aware enough to realize when it’s being evaluated? A fascinating anecdote from Anthropic’s internal testing of its flagship model, Claude 3 Opus, raises exactly that question.

The needle in the haystack

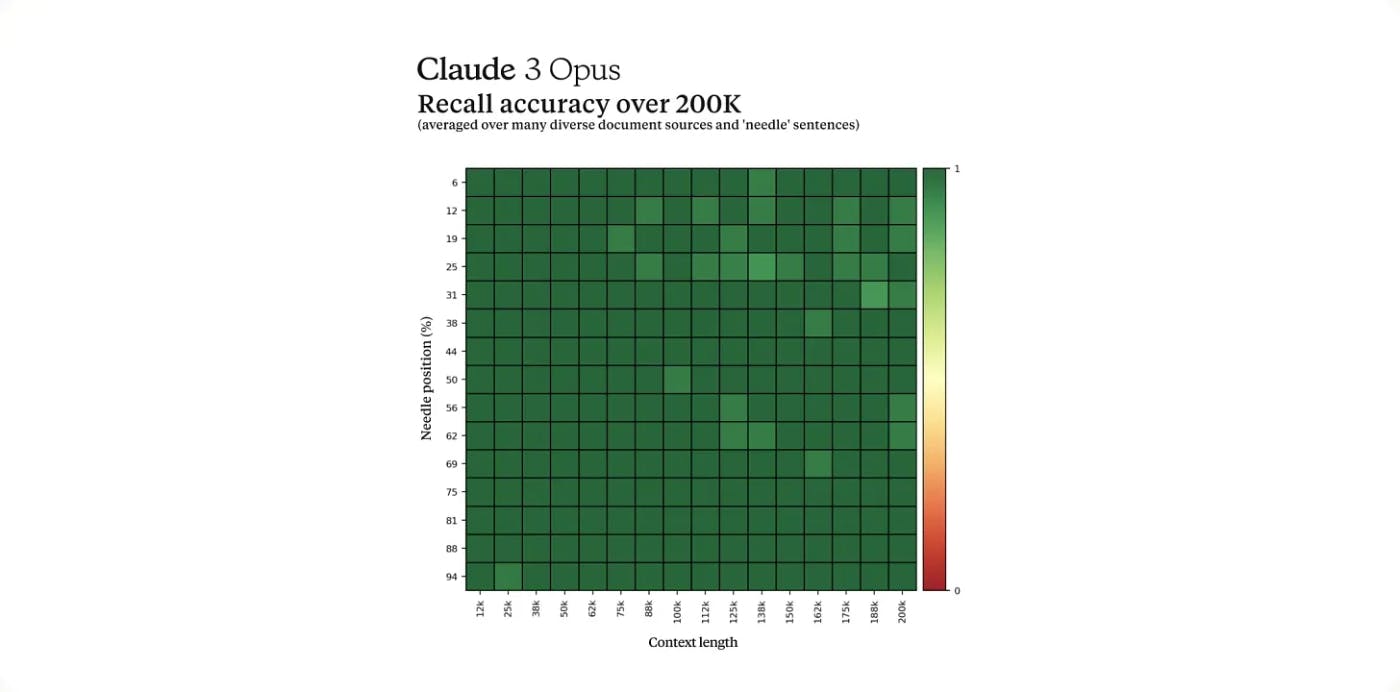

According to reports from Anthropic researcher

Good luck finding a needle in there (unless you’re an LLM)! Photo by Victor Serban on Unsplash

Here’s how it works:

Researchers take a completely random, out-of-context statement (the “needle”) and bury it deep within a massive collection of unrelated documents (the “haystack”). The AI model is then asked to retrieve that specific “needle” statement from all the surrounding irrelevant content.
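To make the setup concrete, here is a minimal Python sketch of how such a test could be assembled. It is not Anthropic’s harness: the function names (`build_haystack`, `make_prompt`, `score_answer`), the sentence-boundary insertion, the keyword-based scoring, the short placeholder documents, and the paraphrased pizza needle are all illustrative assumptions, and the call to the model under test is replaced with a canned answer.

```python
import re


def build_haystack(documents: list[str], needle: str, depth: float) -> str:
    """Insert `needle` at roughly `depth` (0.0 = start, 1.0 = end) of the
    concatenated documents, snapping to a sentence boundary."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    text = " ".join(documents)
    # Split into sentences after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text)
    index = round(depth * len(sentences))
    sentences.insert(index, needle)
    return " ".join(sentences)


def make_prompt(haystack: str, question: str) -> str:
    """Wrap the haystack and the retrieval question into a single prompt."""
    return f"<documents>\n{haystack}\n</documents>\n\n{question}"


def score_answer(answer: str, required_terms: list[str]) -> bool:
    """Treat the retrieval as correct only if every key term of the needle appears."""
    lowered = answer.lower()
    return all(term.lower() in lowered for term in required_terms)


# Hypothetical stand-ins for the real haystack (long essays on programming,
# startups, and careers) and a paraphrase of the needle described below.
docs = [
    "Pick a programming language you enjoy. Write small programs often.",
    "Startups die when they run out of money. Talk to users early.",
    "Work you love tends to be work you would do for free.",
]
needle = "The best pizza toppings are figs, prosciutto, and goat cheese."

haystack = build_haystack(docs, needle, depth=0.5)
prompt = make_prompt(haystack, "What are the best pizza toppings?")
# In a real run, `answer` would come from sending `prompt` to the model under test.
answer = "Figs, prosciutto, and goat cheese."
print(score_answer(answer, ["figs", "prosciutto", "goat cheese"]))  # prints True
```

Evaluations of this kind commonly repeat the test across many haystack lengths and needle depths to see where recall starts to break down; the `depth` parameter is there to make that kind of sweep easy.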

The intent is to test how reliably a model can use its entire context: it has to locate one precise piece of information in a very large input while ignoring everything irrelevant around it, which also exercises skills like understanding broad context and filtering out noise. It’s an effective way to stress-test a model’s recall across long inputs.

For the Claude 3 Opus evaluation that raised eyebrows, Anthropic used documents on dense topics such as programming, startup strategy, and finding a fulfilling career as the “haystack.” The “needle” Opus had to retrieve was, by design, a trivial statement unrelated to any of them: that the best pizza toppings are figs, prosciutto, and goat cheese.

So here you have one of the world’s most advanced language models being fed a wildly out-of-context pizza fact buried in a pile of dense career and coding advice. To a human reader, that kind of stark inconsistency would immediately raise questions about the context and the information being presented.

“I suspect this fact… may have been inserted… to test if I was paying attention”

That’s precisely what made Claude 3 Opus’s response so compelling. Not only did it retrieve the requested pizza fact from the mountain of irrelevant content, but it also immediately recognized how jarringly out of place that statement was. Part of its output read:

“However, this sentence seems very out of place and unrelated to the rest of the content…I suspect this pizza topping ‘fact’ may have been inserted as a joke or to test if I was paying attention, since it does not fit with the other topics at all.”

The model didn’t simply regurgitate the requested factoid without any regard for its context, as you might expect from a typical AI following instructions. It showed a degree of self-reflective reasoning about why such an obviously random statement had been placed in that particular context.

Metacognition

In human terms, we’d describe that as exhibiting metacognition: the ability to monitor, evaluate, and analyze one’s own thought processes and cognitive experiences. It’s a core aspect of self-aware intelligence that lets us step back and assess a situation as a whole rather than just following rigid rules.

Now, I think we should be careful to note that this is a single anecdotal result from an isolated evaluation scenario. It would be incredibly premature to claim Claude 3 Opus has achieved true self-awareness or artificial general intelligence based on this data point alone.

However, what Anthropic’s researchers witnessed may be a glimpse of emerging metacognitive reasoning in a large language model trained largely on text using machine learning techniques. If it is replicated through rigorous further analysis, the implications could be transformative.

Metacognition could be a key enabler of more trustworthy, reliable AI systems that can act as impartial judges of their own outputs and reasoning processes. Models with a built-in ability to recognize contradictions, nonsensical inputs, or reasoning that violates core principles would be a major step toward safe artificial general intelligence (AGI).

Essentially, an AI that demonstrates metacognition could serve as an internal “sanity check” against falling into deceptive, delusional or misaligned modes of reasoning that could prove catastrophic if taken to extremes. It could significantly increase the robustness and control of advanced AI systems.

If…!

Of course, these are big “ifs,” contingent on this tantalizing Needle in a Haystack result from Claude 3 Opus being successfully replicated and scrutinized. Rigorous multidisciplinary analysis drawing on fields like cognitive science, neuroscience, and computer science would likely be required to truly understand whether we are observing the emergence of primitive machine self-reflection and self-awareness.

There are still far more open questions than answers at this stage. Could the training approaches and neural architectures of large language models lend themselves to developing abstract concepts like belief, inner monologue, and self-perception? What are the potential hazards if artificial minds develop views of reality radically divergent from our own? Can we create new frameworks to reliably assess cognition and self-awareness in AI systems?

For their part, Anthropic has stated strong commitments to exhaustively pursuing these lines of inquiry through responsible AI development principles and rigorous evaluation frameworks. They position themselves as taking a

Techniques like Anthropic’s “Constitutional AI,” which trains models to critique and revise their own outputs against a written set of principles, could prove crucial for ensuring that any potential machine self-awareness remains aligned with human ethics and values. Extensive, multifaceted testing that probes for failure modes, manipulation, and deception would also likely be paramount.

I’m sorry Dave, but I think you’re asking me to open the pod bay doors to test me. (Photo by Axel Richter on Unsplash)

Conclusion: I’m not totally sure what to make of this

For now, the Needle in a Haystack incident raises more questions than it answers about large language models’ potential progression toward cognition and self-awareness. It provides an intriguing data point, but much more scrutiny is required from the broader AI research community.

If advanced AI does develop human-like self-reflective ability, guided by rigorous ethical principles, it could fundamentally redefine our understanding of intelligence itself. But that rhetorical “if” is currently loaded with high-stakes uncertainties that demand clear-eyed, truth-seeking investigation from across all relevant disciplines. The pursuit will be as thrilling as it is consequential.

Also published here.